Goals of the project

The Trinity Firefighting Competition is a robotics competition in which participating teams build autonomous, maze-navigating robots capable of finding a fire and putting it out. The competition tests the ability of a team made up of mechanical, hardware, and software engineers to work together to build a robot capable of advanced autonomous behavior. For the Tufts Robotics club, it is a chance to test the skills we have acquired during the preceding portion of the school year.

Nature of the collaboration

Late nights were spent in Bray Labs finishing the robot in preparation for the competition. The group split into smaller teams, each tasked with designing a portion of the robot. The electromechanical team, for instance, selected the fire sensors and then handed them over to the programmers, who developed a fire-sensing algorithm.

Skills

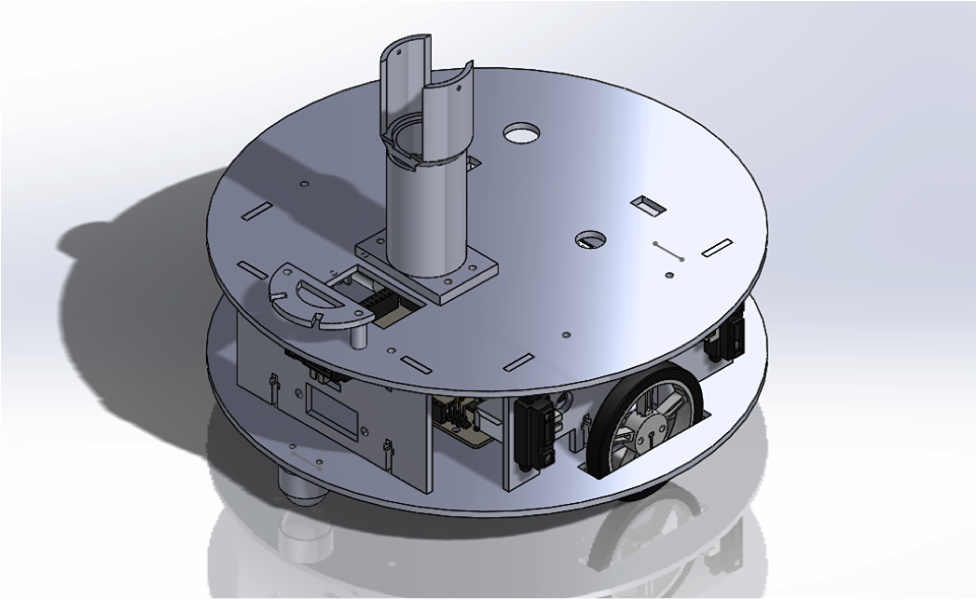

Designing the robot in SolidWorks – The robot was designed in SolidWorks. Its body was made up of two pieces of laser-cut acrylic held apart by acrylic spacers.

3D printing – It was necessary to 3D print certain parts for the robot, such as the casing that held the CO2 canister used to put out the fire.

Programming – At the robot’s core was an Arduino. Software was written and organized into neat libraries for the project. There was a focus on modularity so that code for the fire sensors, for example, could be reused in another project.

Control algorithms – To coordinate sensing and driving accurately, several feedback loops were incorporated into the program. For wall following, the robot’s steering angle was set from the readings of its two side-facing distance sensors: if the robot was too far from the wall, it steered toward the wall; if it was too close, it steered away. This caused the robot to settle at a stable middle distance. A second loop steered the robot toward the fire. The fire-sensing array provided the angle at which the fire was sensed, with an angle of zero indicating that the fire was straight ahead. The robot drove at an angle proportional to that sensed angle, so that it always steered toward the fire.
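The two feedback loops described above can be sketched as simple proportional controllers. This is a minimal illustration only, not the team's actual code: the function names, gains, target distance, and steering limit are all assumed values, and averaging the two side sensor readings is a simplification chosen for the example.

```cpp
#include <algorithm>

// Hypothetical tuning constants; the real gains and limits are not given in this write-up.
constexpr double kTargetWallDistanceCm = 15.0;  // assumed desired gap to the wall
constexpr double kWallGain = 2.0;               // assumed degrees of steering per cm of error
constexpr double kFireGain = 0.8;               // assumed steering per degree of fire bearing
constexpr double kMaxSteerDeg = 30.0;           // assumed steering limit

// Limit a steering command to the range [-kMaxSteerDeg, +kMaxSteerDeg].
double clampSteer(double steerDeg) {
    return std::max(-kMaxSteerDeg, std::min(kMaxSteerDeg, steerDeg));
}

// Wall following: a positive result steers toward the wall, a negative one away.
// The two side-facing readings are averaged into one distance (a simplification).
double wallFollowSteer(double frontSideCm, double rearSideCm) {
    double distanceCm = 0.5 * (frontSideCm + rearSideCm);
    double errorCm = distanceCm - kTargetWallDistanceCm;  // > 0 means too far away
    return clampSteer(kWallGain * errorCm);
}

// Fire homing: a bearing of zero means the fire is straight ahead, so no steering.
double fireSteer(double fireBearingDeg) {
    return clampSteer(kFireGain * fireBearingDeg);
}
```

With these assumed constants, a robot sitting exactly at the target distance gets a steering command of zero, and the command grows with the error until it reaches the limit.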

Tools

Laser cutting – We used a laser cutter to cut the acrylic pieces that made up the robot body.

Selecting and wiring up sensors – Sensors were an integral part of this project, as they allowed the robot to sense its way through a maze and ultimately find a candle. We used many distance sensors as well as a fan array of fire sensors to home in on the fire.

Arduino – An Arduino was at the center of the robot. This competition put to the test all the skills club members had accrued in previous meetings.

3D printer – Parts that could not be made from acrylic were 3D printed.

Process

We began with the design of the physical robot in SolidWorks. The design was informed by previous iterations of Tufts’ Trinity Firefighting robot. For example, in previous years we had used an IR sensor with a cone around it to sense fires, but this proved ineffective. This year, we purchased specially made flame sensors and arranged them in an array, which greatly improved our sensing capability. The robot was made perfectly round so that it would not catch on corners when navigating the maze, as had happened in the past. Once the body of the robot was fabricated, it was time to wire everything up, which did not take long. From there, the group worked together to devise a control scheme for maze navigation and fire sensing. The groundwork was laid quickly, and after that our work was mainly in fine-tuning these controls.

Milestones

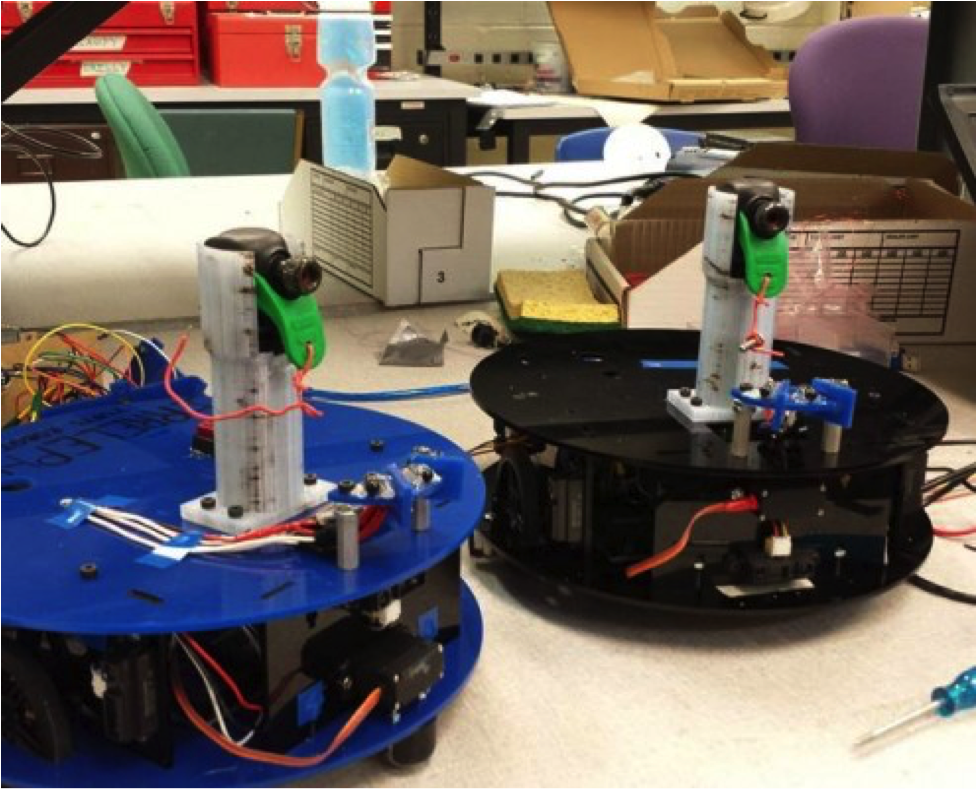

We created two robots for this competition, named “Irrelephant” and “De-Lux”. The first was designed to be more aggressive, and the second played it safer. Again, this project was informed by the flaws of previous years’ designs. One major milestone was the development of an effective wall-following algorithm. This improved our maze-solving capability greatly. Never again did we lose track of the wall. Another milestone was, of course, the first time we put out a candle starting from the beginning of the maze. This was the first sign that the hard work we had put into fine-tuning was paying off.

Challenges encountered

One issue was that, during the competition, the robot drives on a black floor and searches for a white line. The floor of our workspace is white, so our only option for testing was to put down a black line. We solved this issue by adding a variable to the code that switches between searching for white on black and searching for black on white. This taught us to have a good debugging framework in place before issues arise. In the end we had many “switches” at the top of our code that allowed us to test many different aspects of the code simply by changing a 0 to a 1.
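A switch of this kind might look like the sketch below. The macro name, the threshold, and the assumption that a brighter surface gives a higher sensor reading are all hypothetical, chosen only to illustrate the idea of flipping a 0 to a 1 at the top of the file.

```cpp
// Hypothetical debug switch (name is illustrative, not from the team's code).
// 1 = competition floor (white line on black), 0 = lab floor (black line on white).
#define LINE_IS_WHITE_ON_BLACK 1

// Assumed threshold on a 10-bit reflectance reading (0 to 1023); brighter
// surfaces are assumed to produce higher readings.
constexpr int kReflectanceThreshold = 512;

// Decide whether the sensor is over the line. The polarity defaults to the
// switch above but can be passed explicitly, which makes both cases easy to test.
bool isOverLine(int reflectance, bool lineIsWhiteOnBlack = LINE_IS_WHITE_ON_BLACK) {
    bool surfaceIsBright = reflectance > kReflectanceThreshold;
    // White line on black: the line is the bright surface.
    // Black line on white: the line is the dark surface.
    return lineIsWhiteOnBlack ? surfaceIsBright : !surfaceIsBright;
}
```

Keeping the polarity in one place means the line-detection logic itself never changes between the lab and the competition floor.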

We also maintained a Git repository for the duration of the project, which allowed us to version control our code: if a change breaks the whole system, we can easily revert to an earlier version of the code as a failsafe. On a project where so many people are working on the code at once, it is important to have a strict system that preserves the code’s integrity. This was many people’s first experience using Git, and it is a very useful skill for any project like this.

Major outcomes

We did not take home the gold from the competition, though we did win the Senior Team Olympiad (a quiz on engineering knowledge) for the fourth year in a row! Our robot did respectably. It found the candle 2 out of 3 times – its one failure was due to a screw being too loose. This loss taught us to design the system in a more foolproof way, so that even if a screw has not been tightened, the mechanism will still function. That’s just one more lesson for next year.

We also won the smallest robot award at the competition! We felt proud that we were able to condense a robot with all the sensing capabilities necessary into such a compact package.

Innovations, impact and successes

The fire sensing array was the best innovation in this year’s robot, and we plan to reuse it in future years. The sensors themselves were very accurate, and the way we arranged them (in a fan shape) was quite novel and provided us with precisely the information we needed: the heading from the robot’s current position to the fire.
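One way a fan-shaped array can yield a heading is to take an average of the sensor mounting angles, weighted by each sensor's reading. The sketch below illustrates that idea under stated assumptions: the sensor count, the mounting angles, the noise floor, and the function name are hypothetical, and the write-up does not say that this is the method the team used.

```cpp
#include <cstddef>

// Hypothetical layout: five sensors fanned across the front of the robot.
// Angles are in degrees, negative to the left and positive to the right.
constexpr std::size_t kSensorCount = 5;
constexpr double kSensorAnglesDeg[kSensorCount] = {-60.0, -30.0, 0.0, 30.0, 60.0};
constexpr double kMinTotalSignal = 1.0;  // assumed noise floor for "no fire seen"

// Estimate the fire bearing as the reading-weighted average of the sensor angles.
// Returns false and leaves bearingDeg untouched if the summed signal is below
// the noise floor; otherwise writes the bearing and returns true.
bool estimateFireBearing(const double (&readings)[kSensorCount], double& bearingDeg) {
    double total = 0.0;
    double weighted = 0.0;
    for (std::size_t i = 0; i < kSensorCount; ++i) {
        total += readings[i];
        weighted += readings[i] * kSensorAnglesDeg[i];
    }
    if (total < kMinTotalSignal) {
        return false;
    }
    bearingDeg = weighted / total;
    return true;
}
```

A bearing of zero then corresponds to a fire straight ahead, which matches the convention used for steering toward the fire.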

Quinn Wongkew

Riley Wood

Brook Nichols

Zack Pagel
